Dashwood Cinema Solutions

Circa 2010 - 2011

Tim Dashwood, the founder of Dashwood Cinema Solutions, a stereoscopic research, development and consultancy division of his Toronto-based production company Stereo3D Unlimited, is an accomplished director/cinematographer & stereographer.

This was his old website.

Content is from the site's 2010 - 2011 archived pages offering just a glimpse of what this site was all about.

The current website for Dashwood Cinema Solutions is found at: dashwood3d.com where you can find all the latest products, read the blog, and get technical support.

Many advancements have been made and new plug-ins developed since 2010.

CIRCA 2010 - 2011

Dashwood’s mandate is to find affordable solutions to common problems in production and post-production.

By developing award-winning products like Stereo3D Toolbox™, Editor Essentials™ and Stereo3D CAT™, Dashwood has become a leading innovator of 2D and stereoscopic 3D software solutions for iOS and Mac OS X.

Dashwood Cinema Solutions

Founded in 2009 by Tim Dashwood, Dashwood Cinema Solutions established itself as a leader in 3D post-production with the introduction of their flagship product, Stereo3D Toolbox, in 2009, and then again with the release of Stereo3D CAT in 2011, an on-set stereoscopic 3D calibration, planning and monitoring application. Other releases followed like Editor Essentials, Secret Identity and Smooth Skin. The acclaimed cinematic studio won the Vidy Award at NAB 2011 and the prestigious 3D Technology award in 2013 from the International Advanced Imaging Society (formerly known as the International 3D Society.)

Tim Dashwood

Tim Dashwood is the founder of 11 Motion Pictures Limited and its sister companies Dashwood Cinema Solutions and the Toronto-based stereoscopic 3D production company Stereo3D Unlimited Inc., and is also the chief technology officer of Fast Motion Studios.

An accomplished director/cinematographer, editor and stereographer, Dashwood has a diverse range of credits that includes numerous music videos, commercials, documentaries and feature films, as well as S3D productions for 3net, DirecTV, Discovery Channel and the National Film Board of Canada. He also specializes in the previsualization of live action fight/stunt scenes for large-scale productions such as Kick-Ass, Scott Pilgrim vs. The World and Pacific Rim. His recent film credits for cinematography include the films Air Knob, Bob, The Killer and Oscar-Winner Chris Landreth’s Subconscious Password.

As a developer of award-winning software tools for the film industry, Dashwood created the post-production tools Stereo3D Toolbox, Editor Essentials, Secret Identity, and the award-winning calibration/analysis/tracking software Stereo3D CAT.

Considered a thought-leader in the disciplines of camera and stereoscopic 3D production technologies, Dashwood is a contributor at DV Info Net and has spoken at The National Association of Broadcasters Conference (NAB), International Broadcasting Convention (IBC), South By Southwest (SXSW), University of Texas at Austin, Canadian Cinema Editors (CCE), Creative Content World (CCW), AENY, DVExpo, Reel Asian Film Festival, ProFusion, Banff Centre, TFCPUG, CPUG Supermeet, The Toronto Worldwide Short Film Festival and the Toronto International Stereoscopic Conference.

Dashwood graduated with Honours from Sheridan College’s Media Arts program in 1995 and returned to its Advanced Television and Film program in 2000/2001 to study with veteran cinematographer Richard Leiterman CSC. Dashwood is also a member of the Canadian Society of Cinematographers.

As someone managing multiple ecommerce sites, I genuinely appreciate what Dashwood Cinema Solutions has built here. It’s not your typical “add to cart” experience—it’s a deep, highly specialized resource that actually teaches you how the technology works. For niche audiences, especially professionals working in stereoscopic 3D, that kind of detailed guidance is incredibly valuable.

From a digital marketing perspective, I love how the site positions its products through education rather than pure sales—it builds trust, authority, and long-term engagement. That’s something a lot of ecommerce brands still struggle to get right.

If I had one suggestion, it would be to elevate the visual presentation of the products themselves. Bringing in an experienced product specialist like Rue Sakayama would make a huge difference in showcasing the sophistication of the technology. High-end, thoughtfully composed imagery could bridge the gap between complex technical content and immediate visual appeal—helping both seasoned pros and curious newcomers connect with what’s being offered. Edwin Sulah

Noise Industries

Established in 2004, Boston, Massachusetts-based Noise Industries is an innovative developer of visual effects tools for the post production and broadcast community. Their products are integrated with popular non-linear editing and compositing products from Apple, Adobe and Avid. For more information about Noise Industries, please visit the Noise Industries website.

POSTS 2010

A Beginner’s Guide to Shooting Stereoscopic 3D

May 1st, 2010 by Tim Dashwood

Originally published in FCPUG Supermag #4 (April 2010)

It’s 2010 and 3D is back in style, again, for the fourth time in 150 years. Will its popularity last this time? If the actions of TV manufacturers like JVC, Sony, Panasonic and Samsung are any indication, the answer is a resounding “yes!”

Obviously Hollywood has also jumped on the bandwagon, and the overwhelming success of recent 3D films like Avatar has proven that 3D is a money-maker.

So how can you get involved by shooting your own 3D content? It’s actually quite easy to get started and learn the basics of stereoscopic 3D photography. You won’t be able to sell yourself as a stereographer after reading this beginner’s guide (it literally takes years to learn all the aspects of shooting and build the experience to shoot good stereoscopic 3D) but I guarantee you will have some fun and impress your friends.

The basic principle behind shooting stereoscopic 3D is to capture and then present two slightly different points of view and let the viewer’s brain determine stereoscopic depth. It sounds simple enough but the first thing any budding stereographer should learn is some basic stereoscopic terminology. These few terms may seem daunting at first but they will form the basis of your stereoscopic knowledge.

Terminology

Stereoscopic 3D a.k.a. “Stereo3D,” “S-3D,” or “S3D”

“3D” means different things to different people. In the world of visual effects it primarily refers to CGI modeling. For example, every Pixar film ever made was created through the use of “3D” animation techniques, but only “Up” and the re-releases of Toy Story 1&2 were also presented in stereoscopic 3D.

This is why stereographers refer to the craft specifically as “stereoscopic 3D” or simply “S3D” to differentiate it from 3D CGI.

Interaxial (a.k.a. “Stereo Base”) & Interocular (a.k.a. “i.o.”) separation

The interocular separation technically refers to the distance between the centers of the human eyes. This distance is typically accepted to be an average of 65mm or roughly 2.5 inches. Interaxial separation is the distance between the centers of two camera lenses. The human interocular separation is an important constant stereographers use to make calculations for interaxial separation.

The Average Human Interocular Separation is 2.5 inches

Beware that interaxial separation is often incorrectly referred to as “interocular” and vice versa. In the professional world of stereoscopic cinema it has become the norm to refer to interaxial separation as “i.o.”

Stereo Window and Screen Plane

Simply put, the “Stereo Window” refers to the physical display surface. You will be able to visualize the concept if you think of the HD screen as a real window that allows you to view the outside world. Objects in your stereoscopic scene can be behind the window, at the window (the Screen Plane,) or in front of the window. Most of the S3D in Avatar happened behind or on the stereo window and there were only a few “off-screen” gags.

Convergence, Divergence & Binocular Vision

Binocular Vision simply means that two eyes are used in the vision system. Binocular Vision and Convergence are the primary tools mammals use to perceive depth at close range (up to 300 feet for humans.) The wider an animal’s eyes are apart (its interocular distance) the deeper its binocular depth perception or “depth range.”

At greater distances we start to use monocular depth cues like perspective, relative size, occlusion, shadows and relation to horizon to perceive how far away objects are from us.

Normal Comfortable Convergence

Here’s an example of how your binocular vision works. Hold a pen about one foot in front of your face and look at it. You will feel your eyes both angle towards the pen in order to converge on it, creating a single image of the pen. What you may not notice is that everything behind the pen appears as a double image (diverged.) Now look at the wall straight ahead and the pen will appear as two pens because your eyes are no longer converged on it. This “double-image” is known as a retinal disparity and it is the distance between these two images (horizontal parallax) that helps our brain determine how far away an object is.

Excessive Convergence is OK for quick off-screen 3D ‘gags’

In terms of stereoscopic projection, when we “converge” on an object (either through angling the cameras towards the object or shifting the two images in post) that object appears to sit on the screen plane. Any “double-images” resulting from unconverged areas of the scene are perceived to be at a different distance (in front or behind) than the converged object. We refer to objects as having “positive” (background), “negative” (foreground) or “zero” (screen plane) parallax. Human eyes are not meant to diverge more than parallel so it is the stereographer’s responsibility to prevent excessive positive parallax. See the sidenote on the 1/30 rule and the note on Native Pixel Parallax later in this article.

Excessive Divergence in the BG should be avoided. See the note on Native Pixel Parallax in the post section.

This is the basic principle behind stereoscopic shooting and emulating human binocular vision with two cameras. However, be aware that a large percentage of the population (some estimates are 15%) suffer from “stereo-blindness” and cannot perceive depth through strictly horizontal parallax cues.
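The positive/negative/zero parallax convention described above can be sketched in a few lines of Python. This is only an illustration: the function name, the pixel tolerance and the sign convention (right-eye position minus left-eye position) are assumptions made here, not something taken from any particular tool.

```python
def classify_parallax(left_x: float, right_x: float, tolerance: float = 0.5) -> str:
    """Classify the horizontal parallax of one feature, measured in pixels.

    left_x / right_x are the horizontal positions of the same feature in the
    left-eye and right-eye images. Assumed convention: parallax = right - left,
    so a feature that sits further right in the right-eye image is behind the
    screen (positive parallax).
    """
    parallax = right_x - left_x
    if abs(parallax) <= tolerance:  # hypothetical tolerance for "on the screen"
        return "zero (screen plane)"
    if parallax > 0:
        return "positive (background)"
    return "negative (foreground)"


if __name__ == "__main__":
    print(classify_parallax(100.0, 112.0))  # feature behind the stereo window
    print(classify_parallax(100.0, 100.0))  # feature on the screen plane
    print(classify_parallax(100.0, 80.0))   # feature in front of the window
```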

Disparity

Disparity is a “dirty word” for stereographers. In fact the only “good” type of disparity in S3D is horizontal disparity between the left and right eye images. As mentioned before this is known as horizontal parallax.

Any other type of disparity in your image (vertical, rotational, zoom, keystone or temporal) will cause the viewer’s eyes to strain to accommodate. This can break the 3D effect and cause muscular pain in the viewer’s eyes. Every stereographer will strive to avoid these disparities on set or use special software in post-production to correct them.

Rotational and Vertical Disparities in Source Footage

Disparities corrected so all straight lines are parallel

Ortho-stereo, Hyper-stereo & Hypo-stereo

I already mentioned that the average interocular of humans is 65mm (2.5 inches.) When this same distance is used as the interaxial distance between two shooting cameras then the resulting stereoscopic effect is known as “Ortho-stereo.” Many stereographers choose 2.5” as a stereo-base for this reason. If the interaxial distance used to shoot is smaller than 2.5 inches then you are shooting “Hypo-stereo.” This technique is common for theatrically released films to accommodate the effects of the big screen. It is also used for macro stereoscopic photography.

Hypo-stereo & Gigantism: Imagine how objects look from the P.O.V. of a mouse. Photo courtesy photos8.com

Lastly, Hyper-stereo refers to interaxial distances greater than 2.5 inches. As I mentioned earlier the greater the interaxial separation, the greater the depth effect. An elephant can perceive much more depth than a human, and a human can perceive more depth than a mouse. However, using this same analogy, the mouse can get close and peer inside the petals of a flower with very good depth perception, and the human will just go “cross-eyed.” Therefore decreasing the interaxial separation between two cameras to 1” or less will allow you to shoot amazing macro stereo-photos and separating the cameras to several feet apart will allow great depth on mountain ranges, city skylines and other vistas.

The trouble with using hyper-stereo is that scenes with gigantic objects in real-life may appear as small models. This phenomenon is known as dwarfism. The opposite happens with hypo-stereo, where normal sized objects appear gigantic (gigantism). For the rest of this beginner’s guide we will ignore the effects of dwarfism and gigantism and instead focus on just creating 3D depth.

Hyper-stereo & Dwarfism: Imagine how objects look from the P.O.V. of an elephant. Photo courtesy photos8.com
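As a quick way to keep the three terms straight, the ortho/hypo/hyper distinction can be written as a small Python helper. The function name and the 2 mm tolerance are assumptions chosen for illustration (the tolerance lets both 65mm and 2.5 inches, which is 63.5mm, count as ortho-stereo, as the article treats them).

```python
HUMAN_INTEROCULAR_MM = 65.0  # average human interocular used in this guide


def classify_stereo_base(interaxial_mm: float, tolerance_mm: float = 2.0) -> str:
    """Name the stereo effect produced by a given interaxial separation.

    tolerance_mm is a hypothetical allowance around 65mm, not a standard value.
    """
    if interaxial_mm < 0:
        raise ValueError("interaxial separation cannot be negative")
    if abs(interaxial_mm - HUMAN_INTEROCULAR_MM) <= tolerance_mm:
        return "ortho-stereo"
    if interaxial_mm < HUMAN_INTEROCULAR_MM:
        return "hypo-stereo"   # macro work, big-screen features
    return "hyper-stereo"      # skylines, mountain ranges, vistas


if __name__ == "__main__":
    for mm in (25.4, 63.5, 65.0, 1000.0):
        print(mm, "mm ->", classify_stereo_base(mm))
```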

Passive Polarization, Active Shutter Glasses, Anaglyph & Autostereo

There are three basic types of glasses used for presenting stereoscopic 3D material. In most of the Real-D and IMAX theatres in North America the common method is passive polarized glasses with either circular or linear polarizers. Most of the consumer 3DTVs on the market use some form of active shutter glasses to flicker the left and right images on and off at 120Hz. Autostereoscopic displays use lenticular lenses or parallax barrier technologies to present stereoscopic material without the use of glasses.

Anaglyph glasses will work with almost any display but use color filters to separate the left and right images. The most common configurations are red/cyan, blue/amber, and green/magenta. Dolby 3D uses a form of spectral color separation to filter the left and right eyes.

Now that you are familiar with some of the basic stereoscopic terminology it’s time to select your gear.

Gear

Camera and stereo rig selection

In order to create a stereoscopic image we will need to photograph two images from slightly different perspectives. We can use a single camera for still-life stereo-photography or two cameras for motion-picture stereo-cinema. The simplest way to mount one or two cameras is with a “slider bar.” For example, a single camera can take one photo for the left eye perspective, and then simply be slid down the bar to take a photo from the right eye perspective. When shooting with two cameras they can be mounted side by side.

Interaxial separation is an important factor when shooting S3D, and the width of your two cameras will determine the minimum interaxial separation you can achieve side by side.

If your cameras are too wide and a smaller interaxial separation is required then a beam-splitter rig can be employed. Beam-splitters use a 50/50 mirror (similar to teleprompter glass) that allows one camera to shoot through the glass and the other to shoot the reflection. The interaxial can be brought down to as little as 0mm with beam-splitter rigs.

Beamsplitter rigs are expensive, and since most beginner stereographers will be working with a limited budget and a slider bar, selection of “thin” cameras will benefit the ability to achieve small interaxial separation.

Inexpensive Slider Bar

Adjustable Side by Side Rig

Beam-Splitter Rigs allow for very small interaxial separation

Special Stereoscopic Lenses

There are special stereoscopic lenses on the market designed for various digital SLR cameras. These lenses will work with a single camera but capture a left and right point of view in the same frame. The concept is intriguing but the lenses are very slow (F/11 – F/22) and the aspect ratio is vertically oriented.

Loreo3D Attachment for DSLR cameras

Purpose-built Stereoscopic cameras

Stereoscopic film cameras have existed for decades. I personally own a Kodak Stereo camera from the early 50’s that I’ve shot hundreds of 3D slides with. Recently manufacturers like Fujifilm and Panasonic have recognized the demand for digital versions of these cameras and released new products to market.

Fujifilm’s W1 S3D Camera

Panasonic’s AG-3DA1 S3D Camcorder

Genlock capability

If you plan to shoot stereoscopic video with any action then it will be beneficial to use two cameras that can be genlocked together. Cameras that cannot be genlocked will have some degree of temporal disparity. However using the highest frame rate available (720p60 for example) will reduce the chance of detrimental temporal disparity. There are also some devices capable of synchronizing cameras that use LANC controllers.

Ledametrix’s LANC Shepherd
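To see why a higher frame rate helps with cameras that are not genlocked, consider a simplified model (an assumption made here, not a claim from the article): both cameras run at the same rate without drift, and the two clips are aligned to the nearest frame in post. Under that model the leftover timing offset is at most half a frame period. The function names below are hypothetical.

```python
def worst_case_sync_offset_ms(frames_per_second: float) -> float:
    """Worst-case leftover offset, in milliseconds, between two free-running
    cameras after aligning their clips to the nearest frame (half a frame
    period under the simplified model described above)."""
    if frames_per_second <= 0:
        raise ValueError("frame rate must be positive")
    return 1000.0 / (2.0 * frames_per_second)


def false_parallax_px(object_speed_px_per_s: float, frames_per_second: float) -> float:
    """Horizontal error, in pixels, that the worst-case offset introduces for
    an object moving across the frame at the given speed."""
    offset_s = worst_case_sync_offset_ms(frames_per_second) / 1000.0
    return object_speed_px_per_s * offset_s


if __name__ == "__main__":
    for fps in (24, 30, 60):
        print(f"{fps} fps: up to {worst_case_sync_offset_ms(fps):.2f} ms offset, "
              f"{false_parallax_px(600, fps):.1f} px error at 600 px/s")
```

The pixel error shows up as unwanted parallax on anything that moves, which is why fast action and pans are the first things to suffer.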

Interlace vs. Progressive

Every frame of interlaced video will inherently have some degree of temporal disparity between the fields. It is recommended to shoot with progressive formats whenever possible.

Lens & Focal Length selection

Wider lenses will be easier to shoot with for the beginner and will also lend more “dimensionality” to your subjects. Telephoto lenses will compress your subjects flat so they appear as cardboard cutouts. Stay away from “fisheye” lenses because the distortion will cause many geometrical disparities.

This round stump appears as flat as cardboard because it was shot with telephoto lenses

OK, so you’ve learned your terminology and selected your gear. Now what? It’s time to get out there and shoot. We haven’t discussed the various calculations or the rules of S3D but I encourage you to shoot now so you can learn from your mistakes.

The Math & The Rules

Over the years I’ve read and re-read every book I could find on stereoscopic photography. Within those books I have found and attempted to understand a myriad of different stereoscopic equations created by really smart people with names like Rule, Bercovitz, Davis, Lipton, Levonian, MacAdam, Wattie, DiMarzio, Boltjanski, Komar and Ovsjannikova. Please feel free to google them.

I’m not even going to attempt to explain all the intricacies of the various algebraic equations available to calculate things like depth range and depth budget and instead introduce you to the only calculation beginners should concern themselves with: the 1/30 rule.

The 1/30 Rule

The 1/30 rule refers to a commonly accepted rule used by hobbyist stereographers around the world. It basically says that the interaxial separation (stereo-base) should only be 1/30th of the distance from your camera to the closest subject. In the case of ortho-stereoscopic shooting that would mean your cameras should only be 2.5” apart and your closest subject should never be any closer than 75 inches (about 6 feet) away.

Interaxial x 30 = minimum object distance

or

Minimum object distance ÷ 30 = Interaxial

If you are using a couple of DSLR cameras that are 6 inches wide the calculation would look like: 6 x 30 = 180 inches or 15 feet. That’s right… 15 feet!
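The two forms of the 1/30 rule above translate directly into code. The following is a minimal Python sketch; the function names and the adjustable `ratio` parameter are illustrative choices, with 30 as the default.

```python
def min_subject_distance(interaxial: float, ratio: float = 30.0) -> float:
    """Closest allowed subject distance for a given interaxial separation.

    The result is in the same unit as the input (inches in, inches out).
    """
    return interaxial * ratio


def max_interaxial(nearest_subject_distance: float, ratio: float = 30.0) -> float:
    """Largest interaxial separation for a given nearest-subject distance."""
    return nearest_subject_distance / ratio


if __name__ == "__main__":
    print(min_subject_distance(2.5))     # 75.0 inches for an ortho-stereo base
    print(min_subject_distance(6) / 12)  # 15.0 feet for 6-inch-wide cameras
    print(max_interaxial(180))           # 6.0 inches when the subject is 15 feet away
```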

Optional sidenote: Does the 1/30 rule apply to all scenarios?

No, the 1/30 rule doesn’t apply to all scenarios. In fact, in feature film production destined for the big screen we will typically use a ratio of 1/60 or 1/100. The 1/30 rule works well if your final display screen size is less than 75 inches wide, your cameras were parallel to each other, and your shots were all taken outside with the background at infinity. When you are ready to take the next step to becoming a stereographer you will need to learn about depth range and the various equations available to calculate maximum positive parallax (the parallax of the furthest object,) which will translate into a real-world distance when you eventually display your footage. Remember that photo of the eyes pointing outward (diverging)? Well it isn’t natural for humans to diverge and therefore the maximum positive parallax when displayed should not exceed the human interocular of 2.5 inches (65mm.) In the post section I will briefly cover how you can readjust the convergence point to bring the maximum positive parallax within the limits of the native display parallax (2.5 inches.)

Don’t Break the Stereo Window

We discussed briefly how the display screen represents a window and objects can be behind, at or in front of the window. If you want an object to appear in front of the window it cannot touch the edge of the frame. If it does the viewer’s brain won’t understand how the parallax is suggesting the object is in front of the screen, but at the same time it is being occluded by the edge of the screen. When this contradiction happens it is referred to as a window violation and it should be avoided. Professional stereographers have a few tricks for fixing window violations with lighting or soft masks but it is best for beginners to simply obey this rule.

Turn off Image Stabilization

If you are using video cameras with image stabilization you must turn the feature off or the cameras’ optical axes will move independently of each other in unpredictable ways. As you can imagine, this will make it impossible to tune out disparities.

Use identical settings on both cameras

It is very important to use the same type of camera, same type of lens and exactly the same camera settings (white balance, shutter speed, aperture, frame rate, resolution, zoom, codec, etc.) on both cameras. Any differences will cause a disparity. It is also a good idea to use manual focus and set it to the hyperfocal distance or a suitable distance with a deep depth of field.

Use a clapper or synchronize timecode

If your cameras are capable of genlock and TC slave then by all means use those features to maintain synchronization. If you are using consumer level cameras it will be up to you to synchronize the shots in post. In either case you should use a slate with a clapper to identify the shot/takes and easily synch them.

If your cameras have an IR remote start/stop it is handy to use one remote to roll & cut both cameras simultaneously. If you are shooting stills with DSLRs there are ways to connect the cameras with an electronic cable release for synchronized shutters.

Slow down your pans

However fast you are used to panning in 2D, cut the speed in half for 3D. If you are shooting in interlace then cut the speed in half again. Better yet, avoid pans altogether unless your cameras are genlocked. Whip pans should be OK with genlocked cameras.

Label your media “Left” and “Right”

This might seem like a simple rule to remember but the truth is that most instances of inverted 3D are the result of a mislabeled tape or clip. Good logging and management of clips are essential in stereoscopic post-production.

To Converge or Not Converge… That is the question.

One of the most debated topics among stereographers is whether to “toe-in” the cameras to converge on your subject or simply mount the cameras perfectly parallel and set convergence in post-production. Converging while shooting requires more time during production but, one would hope, less time in post-production. However “toeing-in” can also create keystoning issues that need to be repaired later. My personal mantra is to always shoot perfectly parallel and I recommend the same for the budding stereographer.

Post

So you’ve shot your footage and now you want to edit and watch it. For Final Cut Studio there are basically two options for editing and mastering: Cineform’s Neo3D and Dashwood’s Stereo3D Toolbox.

Neo3D

To use Neo3D first transcode all your footage to the Cineform codec. Then use Neo3D to mux your left and right clips into new Cineform 3D quicktimes. The in points of each clip can be adjusted by up to 15 frames to synchronize the left and right eyes perfectly. In Neo3D set your screen type to match your 3D display. If you don’t have a 3D display then use an anaglyph mode with anaglyph glasses and adjust the controls to fix any disparities introduced while shooting. When your clips are ready you can start editing in FCP.

Stereo3D Toolbox

Bring your clips into Final Cut Pro and create a new sequence for each pair of clips. If your clips don’t already start at the same frame it is a good idea to subclip them to the clapper. You can stack the left clip on V2 on top of the right clip on V1 and apply the Stereo3D Toolbox filter to just the left clip and double-click or hit Return to open it in the filters tab. Then just drag the right clip into the right image well. Set your output mode to match your 3D display or use Anaglyph. Repeat the process for each pair and then you can edit in a new sequence with these paired sequences as clips.

Fixing Disparity and Setting Convergence

Both Neo3D and Stereo3D Toolbox have sliders to adjust vertical, rotational, zoom, color & keystone disparities. Fixing these disparities requires skill and practice but my recommendation is to start with rotation and make sure any straight lines are parallel to each other, and then adjust zoom to make sure objects are the same apparent size. Next, adjust the vertical disparity control to make sure all objects are level with each other. Finally, adjust the horizontal convergence to perfectly align the object you wanted to be on the stereo window.

Stereo3D Toolbox Interface

Neo3D Interface

Native Pixel Parallax

There is one last thing you should check after aligning each shot. You must make sure that your background doesn’t exceed the Native Pixel Parallax of your display screen or your audience’s eyes will diverge (which is bad.) The idea here is that the maximum positive parallax (the parallax of your deepest object/background) does not exceed the human interocular distance when presented.

You can determine the Native Pixel Parallax (a.k.a. NPP) by dividing 2.5 inches by the display screen’s width and then multiplying the result by the number of horizontal pixels (i.e. 1920 for 1080p or 1280 for 720p.)

I present my S3D material on JVC’s 46” 3DTV. It is 42 inches wide and 1920 pixels wide so the calculation is 2.5/42×1920 = 114 pixels. This means that the parallax of the background should not exceed 114 pixels.
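The NPP calculation above is easy to script when you need it for several displays. This is a small Python sketch of the same arithmetic; the function names are illustrative and are not part of Stereo3D Toolbox or Neo3D.

```python
def native_pixel_parallax(screen_width_in: float,
                          horizontal_pixels: int,
                          interocular_in: float = 2.5) -> float:
    """Maximum background parallax, in pixels, before the viewer's eyes diverge.

    Implements: interocular / screen width x horizontal pixel count.
    """
    if screen_width_in <= 0:
        raise ValueError("screen width must be positive")
    return interocular_in / screen_width_in * horizontal_pixels


def exceeds_npp(background_parallax_px: float,
                screen_width_in: float,
                horizontal_pixels: int) -> bool:
    """True if the measured background parallax is over the display's limit."""
    return background_parallax_px > native_pixel_parallax(screen_width_in,
                                                          horizontal_pixels)


if __name__ == "__main__":
    npp = native_pixel_parallax(42, 1920)
    print(int(npp))                     # 114, matching the 42-inch-wide example
    print(exceeds_npp(130, 42, 1920))   # True: this background would diverge
```

Rounding down, as the example does, keeps the limit on the safe side.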

In Stereo3D Toolbox you can enter your screen width and the filter will automatically calculate NPP and display a grid. If the parallax in your background does exceed this limit then adjust your convergence to move the depth range back away from the viewer.

Share your S3D Masterpiece on YouTube with the yt3d tag

Now that you have finished editing and mastering your S3D movie it is time to share it with the world. YouTube added the capability to dynamically present S3D content in any anaglyph format. All you have to do is export your movie file as “side by side squeezed” and encode it as H264 with Compressor. I recommend using 1280x720p rather than 1080p for S3D content on YouTube. The workload of rendering the anaglyph result is handled by the viewer’s computer so 1080p will decrease the frame rate on most laptops.

Upload your movie file to YouTube and then add the tag “yt3d:enable=true” to enable YouTube 3D mode. If your footage is 16×9 aspect ratio also add the tag “yt3d:aspect=16:9”. YouTube 3D expects crossview-formatted side by side, so if you exported as side by side parallel instead of crossview you will need to add the tag “yt3d:swap=true” to ensure the left and right eyes are presented correctly.

Output as Side by Side Squeeze

Add YouTube 3D tags

YouTube 3D Display Modes

Anaglyph Display of finished movie
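The tagging rules described above can be summarized as a short Python helper. The tag strings are the ones quoted in this article; the function name and its parameters are hypothetical, and nothing here talks to YouTube itself.

```python
from typing import List


def yt3d_tags(is_16x9: bool, layout: str = "crossview") -> List[str]:
    """Build the list of yt3d tags for a side-by-side squeezed upload.

    layout is "crossview" (what YouTube 3D expects, per the article) or
    "parallel" (which needs the swap tag).
    """
    if layout not in ("crossview", "parallel"):
        raise ValueError("layout must be 'crossview' or 'parallel'")
    tags = ["yt3d:enable=true"]
    if is_16x9:
        tags.append("yt3d:aspect=16:9")
    if layout == "parallel":
        tags.append("yt3d:swap=true")  # swap eyes for parallel-ordered exports
    return tags


if __name__ == "__main__":
    print(yt3d_tags(is_16x9=True, layout="parallel"))
```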

I think I’ve covered the basics of shooting & posting stereoscopic 3D but we’ve really just scratched the surface of what a professional stereographer needs to know. If you want to continue your education in this area I recommend you pick up Bernard Mendiburu’s “3D Movie Making” or search your library for the “bible” of stereoscopic 3D, Lenny Lipton’s classic “Foundations of the Stereoscopic Cinema: A Study in Depth.”

User Stories Wanted

October 29th, 2010

We want your feedback. Share your production experiences with Stereo3D Toolbox and you may have the opportunity to see your company featured in international trade publications as well as on our website and newsletter.

World’s First 3D Music Video Shot with ARRI’S Alexa Camera

September 10th, 2010

Tim Dashwood's production company Stereo3D Unlimited, in partnership with Arri, Stereo3D Tango, JVC and Zeiss shot Ariana Gillis' "Shake the Apple" music video with two Arri Alexa cameras.

Toronto-based production company Stereo3D Unlimited has just shot and released the stereoscopic 3D music video “Shake The Apple” by Ariana Gillis on YouTube 3D and Vimeo. The four-minute video was shot at Stereo3D Unlimited’s studio in Toronto using two Arri Alexa cameras, Zeiss Master Prime lenses, the Stereo3D Tango beamsplitter rig, and Dashwood Cinema Solutions’ Stereo3D Live™ calibration/analysis software. Post was completed in Final Cut Pro and After Effects with Dashwood Cinema Solutions’ Stereo3D Toolbox™.

“What started as a simple stereoscopic test of the new Arri Alexa cameras turned into a full-fledged 3D music video that we are very proud of” said Tim Dashwood, owner/CTO of Stereo3D Unlimited and founder of Dashwood Cinema Solutions. “The Alexa cameras performed exactly as advertised and held perfect sync when tethered together for stereoscopic 3D.”

Tim Dashwood to Unveil New 3D Mastering Technology at IBC 2010

September 7th, 2010

On-site at the Matrox “3D Pod,” stereo-cinematographer Tim Dashwood will discuss 3D box-office productions and give attendees an exclusive “sneak peek” at the next trendsetting 3D technology.

Canada-based company, Dashwood Cinema Solutions, has been invited by Matrox to demonstrate their cutting-edge stereoscopic 3D solutions on the Matrox stand (7.B29) during the 2010 International Broadcasting Convention (IBC), to be held at the RAI Amsterdam from September 10-14.

Tim Dashwood, founder of Dashwood Cinema Solutions, is a seasoned cinematographer, director and editor. Shooting stereoscopic videos for the last decade, he recently shifted gears to focus his creative efforts on stereo cinematography. Dashwood has served as a cinematographer for five feature-length films and countless short films and music videos.

IBC 2010

September 10-14, 2010

RAI Exhibition and Congress Centre, Amsterdam

Dashwood Cinema Solutions Sponsors Upcoming Stereo 3D Filmmaking Bootcamp Webinar

August 26th, 2010

NewMediaWebinars.com is pleased to announce the addition of Dashwood Cinema Solutions as a Gold sponsor for the upcoming free live webinar titled “Stereo 3D Filmmaking Bootcamp” presented by James Neihouse, DP of the new Hubble 3D IMAX movie.

James Neihouse, the award-winning director of photography and DP of the new Hubble 3D IMAX movie, is presenting this free “how-to” live webinar scheduled for August 26, 2010 at 10:00 am PDT. In this webinar, attendees will learn the basics of 3D/Stereo cinematography, beginning with the terminology then stepping through the process and methods of shooting in 3D. They will learn about 3D rigs, setting up cameras, the effects of interaxial distance (the distance between the lenses), convergence of the cameras, screen size, and 3D editing techniques.

As an added bonus Dashwood Cinema Solutions is also contributing two copies of Stereo3D Toolbox LE to be given away to two lucky attendees during the live webinar.

“We are happy to have Dashwood Cinema Solutions as a partner and sponsor in this upcoming free live webinar,” states Marcelo Lewin, Founder and CEO of NewMediaWebinars.com. “They offer a great solution for 3D filmmakers and we are sure that all of our attendees appreciate their support as well!”

The 3D Toolbox

August 13th, 2010

Originally published on Digital Content Producer Aug 12, 2010

by Barry Braverman

Transitioning to shooting in 3D

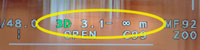

The Panasonic AG-3DA1 is a one-piece HD camcorder that eliminates 90 percent of the setup hassles associated with conventional 3D rigs. A useful readout in the camera viewfinder indicates the acceptable divergence for 77in. and 200in. screens.

The eyes of a typical adult human being are approximately 60mm to 70mm apart. To reproduce the human perspective as closely as possible, engineers adopted a fixed inter-axial distance of 60mm, thus ensuring at normal operating distances from 10 meters to 100 meters that the roundness of objects and background position appear life-like, a key consideration for shooters looking to mimic to the extent possible the human experience on earth.

The 3DA1 features a unique 3D guide for advising shooters of the practical depth range in a scene. Two 3D guide settings are provided for use with 42in. and 77in. screens.
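
To get a feel for why a depth guide depends on screen size, here is a rough sketch of the arithmetic. It is not the camera's actual algorithm. It assumes a 16:9 screen, an adult eye separation of 65mm, and the common rule of thumb that background parallax on the screen should not exceed the viewer's eye separation, since anything wider forces the eyes to diverge. The function names are hypothetical.

```python
import math

EYE_SEPARATION_MM = 65.0  # assumed adult average, within the 60mm-70mm range
MM_PER_INCH = 25.4


def screen_width_mm(diagonal_in, aspect=(16, 9)):
    """Width of a screen in millimetres, given its diagonal in inches."""
    w, h = aspect
    return diagonal_in * MM_PER_INCH * w / math.hypot(w, h)


def max_parallax_fraction(diagonal_in):
    """Largest positive parallax, as a fraction of image width, before the
    on-screen separation exceeds the assumed eye separation."""
    return EYE_SEPARATION_MM / screen_width_mm(diagonal_in)


for size in (42, 77, 200):
    frac = max_parallax_fraction(size)
    # Express the limit as a percentage and as pixels of a 1920-wide frame.
    print(f"{size}in screen: {frac * 100:.1f}% of width, about {frac * 1920:.0f} px")
```

Under these assumptions the limit works out to roughly 7% of image width on a 42in screen, 3.8% on a 77in screen and 1.5% on a 200in screen, which is why a shot that is comfortable on a television can diverge on a large projection screen.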

If we narrow the interocular (IO) distance in the camera to only 3mm, we would produce a perspective suggestive of an insect with eyes 3mm apart. The narrow IO may make sense for a story about enterprising mosquitoes, or for shooting close-ups of smallish objects to reduce the severe convergence angle that leads to 3D headaches. Conversely, if we’re shooting King Kong in 3D, we might increase the IO substantially to 2 meters or more to reflect the giant ape’s perspective; the increased amount of 3D in the scene motivated by the much wider positioning of the eyes in King Kong’s skull.
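
The scaling effect described here can be approximated with simple arithmetic. As a rough rule of thumb (an assumption of this sketch, not a statement from the article), the world appears scaled by about the ratio of the viewer's eye separation to the camera's interocular distance. The 65mm viewer value and the function name are illustrative.

```python
HUMAN_EYE_SEPARATION_MM = 65.0  # assumed viewer average


def apparent_scale(camera_io_mm, viewer_io_mm=HUMAN_EYE_SEPARATION_MM):
    """Approximate factor by which the scene appears scaled to the viewer.

    Values above 1 make the world look enlarged (an insect's view);
    values below 1 make it look miniaturized (a giant's view).
    """
    if camera_io_mm <= 0:
        raise ValueError("interocular distance must be positive")
    return viewer_io_mm / camera_io_mm


# The three cases from the text: 3mm insect, 60mm camcorder, 2m giant ape.
for label, io_mm in (("insect", 3.0), ("3DA1", 60.0), ("King Kong", 2000.0)):
    print(f"{label}: IO {io_mm:g}mm -> apparent scale x{apparent_scale(io_mm):.2f}")
```

A 60mm camera lands close to 1, which matches the article's point that the fixed interocular distance was chosen to look life-like.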

Stereo3D Toolbox v2.0.1 update now available

July 1st, 2010

If you have already installed FxFactory 2.1.6 you can download and install Stereo3D Toolbox v2.0.1.

This update primarily addresses two regressive bugs introduced at the time of the v2.0 release:

1) Selecting “2D” as an input source now properly maps the 2D source to both the left and right

2) The Alpha channel of your input media will now be maintained in the Geometry filter

This update also includes some other improvements:

1) Zooming and panning is now available in the 3D Preview Modes within the User Interface. This allows you to zoom in on an area of interest and fine tune disparity corrections

2) Virtual Floating Windows now have a drop-down option to use either vertical or angled masks. The vertical masks can only affect one side of the frame at a time (single slider) but the angled option allows for full control of the top and bottom mask coordinates in pixels.

3) The input and output drop downs now include additional options to select top/bottom or line-by-line order

4) The input and output drop downs also have minor terminology changes for better clarity and consistency among the filters in the suite. There are so many different terms in the industry to describe the same “3D format” that we have added as many “also known as” references as room allows.

5) The Autoscale button was brought up from the disparity controls folder to the top level of the UI. This way you can easily engage it if you only need to make convergence adjustments.
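
For readers unfamiliar with the top/bottom and line-by-line formats mentioned in item 3, the following sketch shows one way such frames could be assembled. It is an illustration only, not the plug-in's code: frames are plain lists of pixel rows, both eyes are assumed to have the same dimensions, and the function names and the `left_first` flag are hypothetical.

```python
def pack_top_bottom(left, right, left_first=True):
    """Stack the two eyes vertically, each at full height."""
    first, second = (left, right) if left_first else (right, left)
    return [row[:] for row in first] + [row[:] for row in second]


def pack_line_by_line(left, right, left_first=True):
    """Interleave rows: even output rows come from one eye, odd rows from
    the other. The output has the same height as a single input frame."""
    if len(left) != len(right):
        raise ValueError("both eyes must have the same number of rows")
    first, second = (left, right) if left_first else (right, left)
    return [(first if y % 2 == 0 else second)[y][:] for y in range(len(left))]


# Tiny 4-row frames, one "pixel" per row, labelled by eye and row number.
left = [["L0"], ["L1"], ["L2"], ["L3"]]
right = [["R0"], ["R1"], ["R2"], ["R3"]]

print(pack_top_bottom(left, right))
print(pack_line_by_line(left, right))
print(pack_line_by_line(left, right, left_first=False))
```

The `left_first` flag stands in for the "order" choice: swapping it changes which eye lands on top or on the even lines, which is the kind of mismatch that makes a 3D display show the eyes reversed.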

Enjoy!

Tim

FxFactory Partner Dashwood Cinema Solutions Sets 3D Milestone

June 24th, 2010

New Stereo3D Toolbox 2.0 and Stereo3D Toolbox LE plug-ins extend advanced and basic stereoscopic mastering capabilities for professional 3D box-office creations inside Final Cut Studio and After Effects

Noise Industries development partner Dashwood Cinema Solutions has released a significant update to its award-winning, professional Stereo3D Toolbox™ plug-in for stereoscopic 3D mastering, as well as an inexpensive limited edition (LE) version for a more accessible entry into stereoscopic 3D content creation.

Developed by stereo-cinematographer and Dashwood Cinema Solutions founder Tim Dashwood, both plug-in suites are powered by FxFactory and are designed to work with Adobe® After Effects®, Apple® Final Cut Pro®, Apple Motion®, and Apple Final Cut® Express applications.

“The Only 3D Software You Need”

June 3rd, 2010

Originally published on 3DGuy.tv June 3, 2010

by Al Caudillo

Tim Dashwood is a cinematographer. He isn’t a software writer. He comes from, as he puts it, “an independent world of filmmakers”. What that means is he doesn’t always have all the big toys that are needed to get a production done. He has to think outside the box to accomplish what he needs to do the job.

He has taken that spirit into 3D. His creation 3D Toolbox makes use of the Final Cut Pro Platform and gives 3D shooters and editors an economical professional solution. In other words, he found a need and filled it. 3D Toolbox is by far the best, if not one of the only affordable 3D packages to come out in the new age of 3D. How strong is his software? Panasonic named his company and software as a “Supporting Partner” in their 3D program. This 3D software package is perfect for independent movie makers and documentary filmmakers.

A Beginner’s Guide to Shooting Stereoscopic 3D

May 1st, 2010

Originally published in FCPUG Supermag #4 (April 2010)

It’s 2010 and 3D is back in style, again, for the fourth time in 150 years. Will its popularity last this time? If the actions of TV manufacturers like JVC, Sony, Panasonic and Samsung are any indication the answer is a resounding “yes!”

Obviously Hollywood has also jumped on the bandwagon and the overwhelming success of recent 3D films like Avatar has proven 3D is a money-maker.

So how can you get involved by shooting your own 3D content? It’s actually quite easy to get started and learn the basics of stereoscopic 3D photography. You won’t be able to sell yourself as a stereographer after reading this beginner’s guide (it literally takes years to learn all the aspects of shooting and build the experience to shoot good stereoscopic 3D) but I guarantee you will have some fun and impress your friends.

POSTS 2011

Stereo3D CAT wins 2011 Vidy Award

April 19th, 2011

Stereo3D CAT has been awarded the 2011 Vidy Award to recognize achievement in the advancement of the art and science of production and post-production technology.

From the editors of Videography Magazine, the Videography Vidy Awards are the longest-running awards program at NAB. We are proud to accept this prestigious award, another significant milestone for the company.

LE 3D Editing Software Reviewed

April 14th, 2011

Originally published in Videomaker Magazine April 2011

by Mike Houghton

If you’re interested in creating 3D video, this plug-in provides a great way to mix your footage together for a reasonable price.

Unless you’ve been avoiding the movie theater for the last couple years, you’ve likely seen a 3D movie, possibly with explosions and car chases, bursting from the screen. Perhaps as you left, you held onto your 3D glasses thinking: “I wonder if I can make my own 3D videos.”

Yes you can. You’ll need two cameras or a special adapter to record 3D images. Once you have the footage, you’ll have a new problem: crafting a stereoscopic video. Bring in Stereo3D Toolbox LE and a lot of the hassle of doing that is gone. And depending on how you eventually show your 3D video, those theater glasses might come in handy after all!

Stereo3D Toolbox 3.0 adds real-time playback

April 12th, 2011

Originally published on MacNN April 12, 2011

3D developer Dashwood Cinema has announced an update to its stereoscopic 3D mastering software, Stereo3D Toolbox 3.0. The set of nine plug-ins can align stereoscopic videos, reduce ghosting and adjust parallax, among other options. The plug-ins are built on Noise Industries’ FxFactory platform and work with Adobe After Effects, Apple Final Cut Pro, Apple Motion and Apple Final Cut Express. Version 3.0 introduces streamlined code that speeds up operation, including faster previews and real-time playback on supported hardware.

3D Pavilion: Systems, Services, Solutions

April 11th, 2011

Originally published in NAB Daily News April 11, 2011

by Cristina Clapp

3D expert Tim Dashwood feels the rush for content producers to implement stereoscopic 3D into their workflow has caused some companies to cut corners, resulting in a few “bad apples” spoiling the public’s perception of stereoscopic 3D in general.

Dashwood's Production Company at a Stereoscopic 3D Music Video Shoot with Two ARRI Alexa Cameras

“However,” he explains, “with the introduction of new technology — coupled with the appropriate education — content producers can focus more on the artistic side of 3D filmmaking, rather than overcoming the hurdles of pushing out content.”

Dashwood, whose company Dashwood Cinema Solutions offers both 3D technology and education, is exhibiting at the 3D Pavilion in the east end of the Central Hall. Along with fellow 3D Pavilion participants Stereo3D Unlimited, Qube Cinema, 21st Century 3D, emotion3D, and American Paper Optics, Dashwood Cinema Solutions develops tools for content professionals who create, manage and display 3D content.

The 3D Pavilion is presented in partnership with the 3D@Home Consortium, an organization dedicated to ensuring the best possible three-dimensional viewing experience for consumers in today’s entertainment market.

Dashwood Unveils Breakthrough 3D Production System: Stereo3D CAT

April 7th, 2011

Built for stereographers by stereographers, Stereo3D CAT™ provides live depth analysis and disparity/alignment feedback for lightning-fast 3D camera rig calibration.

Dashwood Cinema Solutions, developer of stereoscopic software, is pleased to announce a quantum leap in its line of cutting-edge Mac®-based stereoscopic 3D products — Stereo3D CAT™. Stereo3D CAT™ is an unrivaled on-location calibration and analysis system that removes all of the complexities associated with stereoscopic 3D camera calibration in production.

Dashwood Cinema Solutions to Debut Ground-Breaking 3D Technology Product Line Up at NAB 2011

February 11th, 2011

Calibrate, analyze, master, render; Dashwood’s new product line automates complex production tasks and provides an easy transition into Stereo 3D

Toronto, Canada – February 11, 2011 – Dashwood Cinema Solutions, developer of 3D solutions, has announced its plan to unveil a new line of cutting-edge Mac®-based stereoscopic 3D products at the National Association of Broadcasters (NAB) convention, held in Las Vegas, NV from April 11-14, 2011, in the 3D Pavilion (booth number C10514D3). Designed to accelerate 3D productions from camera lens calibration to mastering, Dashwood’s new product line automates complex production tasks and lends continuity to 3D workflows. “Our new products address gaps in typical 3D workflows. They remove the complexities of working in 3D and significantly reduce downtime during 3D production,” comments Tim Dashwood, founder of Dashwood Cinema Solutions. “With these tools, 3D production teams can work with greater confidence, speed and efficiency.” Visitors to the NAB show can also experience some of the new Dashwood 3D solutions on the DSC Labs (C10215), Matrox (SL2515), Stereo3D Unlimited (C10514D1), and Panasonic (C3707) booths.

More Background On DashwoodCinemaSolutions.com

DashwoodCinemaSolutions.com represents a significant moment in the evolution of stereoscopic 3D filmmaking, capturing a time when independent innovators were helping reshape how filmmakers approached depth, immersion, and visual storytelling. Originally launched as the online hub for Dashwood Cinema Solutions, the site functioned as both a product showcase and an educational resource dedicated to advancing stereoscopic 3D production techniques.

Although the original website is no longer active in its early form, its archived presence provides valuable insight into the company’s foundational philosophy, technological contributions, and its role in making advanced 3D tools accessible to a broader audience of filmmakers and post-production professionals.

At the center of this story is Tim Dashwood, whose work bridges the gap between creative cinematography and software engineering.

Founding, Ownership, and Organizational Structure

Dashwood Cinema Solutions was founded in 2009 by Tim Dashwood, a Toronto-based cinematographer, stereographer, and software developer. The company operated as part of a broader ecosystem of related ventures, including his production company Stereo3D Unlimited and other affiliated entities focused on stereoscopic production and innovation.

Unlike traditional software companies built around large engineering teams, Dashwood Cinema Solutions emerged from a practitioner-led model. Dashwood himself was deeply involved in filmmaking, which gave him firsthand insight into the technical challenges faced by production crews working with stereoscopic 3D. This practical experience directly informed the tools and solutions developed under the Dashwood brand.

The company’s headquarters and operational base were in Toronto, Canada—placing it within a vibrant media production environment that includes connections to international film, television, and commercial production networks.

Mission and Core Philosophy

From its inception, Dashwood Cinema Solutions focused on a clear and compelling mission: to create affordable, practical tools that solve real-world problems in stereoscopic 3D production and post-production.

This philosophy set the company apart in several important ways:

- Accessibility over exclusivity: At a time when 3D production was often associated with high-budget Hollywood studios, Dashwood’s tools aimed to empower independent filmmakers and smaller production teams.

- Education-driven engagement: The website emphasized teaching users how stereoscopic systems work, rather than simply selling software.

- Workflow efficiency: Many of the tools were designed to streamline complex processes such as calibration, alignment, and disparity correction.

This combination of education, affordability, and usability helped build trust within a niche but highly engaged professional audience.

Products and Technological Innovation

DashwoodCinemaSolutions.com prominently featured a suite of software tools that became widely recognized within the stereoscopic 3D community. These tools were designed primarily for Mac-based workflows and integrated with industry-standard editing platforms.

Stereo3D Toolbox

The flagship product, Stereo3D Toolbox, was a powerful plugin suite designed for stereoscopic 3D post-production. It allowed users to:

- Align left and right eye footage

- Adjust convergence and parallax

- Correct disparities (vertical, rotational, and keystone)

- Output content in multiple 3D formats
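
Convergence adjustment in post is commonly done by horizontal image translation: sliding one eye's image sideways changes the parallax of every object by the same amount. The sketch below illustrates the idea on frames stored as lists of pixel rows. It is not the Toolbox's implementation, and the function names are hypothetical.

```python
def shift_rows(frame, dx, fill=0):
    """Translate every row horizontally by dx pixels (positive = right),
    padding the exposed edge with `fill` so the width is unchanged."""
    out = []
    for row in frame:
        width = len(row)
        if dx >= 0:
            out.append([fill] * min(dx, width) + row[:max(width - dx, 0)])
        else:
            out.append(row[-dx:] + [fill] * min(-dx, width))
    return out


def adjust_convergence(left, right, shift_px):
    """Shift the right eye by shift_px. The parallax of every point,
    measured as x_right - x_left, changes by exactly shift_px."""
    return left, shift_rows(right, shift_px)


# One bright pixel seen at x=2 by the left eye and x=4 by the right eye,
# i.e. a parallax of +2 pixels (behind the screen plane).
left = [[0, 0, 9, 0, 0, 0]]
right = [[0, 0, 0, 0, 9, 0]]

_, converged = adjust_convergence(left, right, -2)
print(converged)  # the pixel now sits at x=2 in both eyes: zero parallax
```

The shift leaves blank columns at one edge of the translated image, which is why tools of this kind typically crop or scale the result afterwards.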

The software integrated with platforms like Final Cut Pro and Adobe After Effects, making it highly practical for professionals already working within established editing ecosystems.

Stereo3D CAT

Stereo3D CAT, the company's on-location calibration and analysis system, represented a major step forward in on-set 3D production. It provided real-time feedback for:

- Camera alignment

- Depth analysis

- Rig calibration

This tool significantly reduced the complexity and time required to prepare stereoscopic camera setups, especially for dynamic shoots.

Editor Essentials and Additional Tools

Other software offerings included Editor Essentials, Secret Identity, and various utility plugins that enhanced workflow efficiency and creative flexibility.

Together, these tools formed a cohesive ecosystem that addressed nearly every stage of the stereoscopic pipeline—from shooting to final output.

Integration with Industry Platforms

A key factor in the success of Dashwood Cinema Solutions was its integration with widely used software platforms. Through partnerships with companies like Noise Industries, Dashwood’s tools were distributed via the FxFactory platform, ensuring compatibility with:

- Apple’s Final Cut ecosystem

- Adobe’s Creative Suite

- Motion graphics and compositing environments

This strategic integration allowed Dashwood’s products to reach a broader audience without requiring users to adopt entirely new workflows.

Role in the 3D Renaissance

The early 2010s marked a major resurgence in stereoscopic 3D, driven in part by the success of blockbuster films like Avatar. This renewed interest extended beyond theaters into consumer electronics, broadcast media, and independent filmmaking.

DashwoodCinemaSolutions.com emerged during this “3D renaissance” and played a meaningful role in supporting the growing demand for accessible tools and knowledge.

The site’s blog and educational content provided guidance on topics such as:

- Stereoscopic terminology

- Camera rig selection

- Interaxial distance and convergence

- Post-production workflows

By demystifying complex concepts, Dashwood helped lower the barrier to entry for creators interested in experimenting with 3D.

Educational Content and Thought Leadership

One of the most distinctive features of DashwoodCinemaSolutions.com was its commitment to education. The site regularly published in-depth articles, tutorials, and technical guides aimed at both beginners and experienced professionals.

These resources covered:

- The fundamentals of binocular vision and depth perception

- Practical shooting techniques

- Common pitfalls in stereoscopic production

- Best practices for editing and mastering 3D content

Dashwood himself was widely regarded as a thought leader in the field, contributing to industry publications and speaking at major events such as:

- NAB Show

- International Broadcasting Convention

- South by Southwest

This combination of online content and in-person engagement reinforced the company’s authority within the stereoscopic community.

Awards and Industry Recognition

Dashwood Cinema Solutions received several notable awards and recognitions, reflecting the impact of its innovations.

Among the most significant:

- Vidy Award (2011) for Stereo3D CAT

- 3D Technology Award (2013) from the International Advanced Imaging Society

These accolades underscored the company’s role in advancing both the technical and artistic aspects of stereoscopic filmmaking.

Notable Projects and Collaborations

Through its connection with Stereo3D Unlimited, Dashwood Cinema Solutions contributed to a range of high-profile projects and experimental productions.

These included:

- Early stereoscopic music videos shot with paired ARRI Alexa cameras

- Collaborations with major brands and technology providers

- Participation in industry demonstrations and exhibitions

Dashwood’s work also intersected with major film productions, including previsualization for action sequences in films like Pacific Rim and Scott Pilgrim vs. the World.

Audience and User Base

DashwoodCinemaSolutions.com primarily served a specialized audience, including:

- Independent filmmakers

- Professional stereographers

- Video editors and post-production specialists

- Broadcast and commercial production teams

Despite its niche focus, the site attracted a global audience due to the universal applicability of its tools and educational content.

The emphasis on affordability and usability made it particularly appealing to smaller studios and freelance professionals.

User Experience and Site Design

From a digital marketing and user experience perspective, DashwoodCinemaSolutions.com stood out for its content-first approach.

Rather than prioritizing aggressive sales tactics, the site:

- Positioned products within a broader educational context

- Provided detailed explanations of features and workflows

- Encouraged user engagement through tutorials and feedback requests

This strategy helped build long-term relationships with users and fostered a sense of community around the brand.

Press Coverage and Media Presence

Dashwood Cinema Solutions received coverage in various industry publications and media outlets, particularly those focused on digital video, post-production, and broadcast technology.

The company’s tools were reviewed and discussed in:

- Trade magazines

- Online filmmaking communities

- Conference presentations and panels

This visibility contributed to its reputation as a trusted innovator in the stereoscopic space.

Cultural and Technological Significance

The importance of DashwoodCinemaSolutions.com extends beyond its specific products. It represents a broader shift in how technology is developed and distributed within the creative industries.

Key aspects of its significance include:

- Democratization of advanced tools: Making sophisticated 3D capabilities available to non-studio creators

- Integration of art and engineering: Bridging creative vision with technical execution

- Community-driven innovation: Leveraging user feedback and real-world experience

In many ways, Dashwood Cinema Solutions exemplifies the rise of independent developers who challenge traditional industry structures.

Evolution and Transition to Dashwood3D.com

As technology evolved and the company expanded its offerings, Dashwood Cinema Solutions transitioned to a new online presence at dashwood3d.com.

This newer platform reflects:

- Updated product lines and capabilities

- Continued innovation in 3D and immersive media

- Ongoing support and engagement with users

The shift also highlights the rapid pace of change in digital production technologies, particularly as interest in stereoscopic 3D fluctuated over time.

Legacy and Lasting Impact

Although the original DashwoodCinemaSolutions.com is now largely an archived resource, its influence remains evident in several areas:

- The continued use of its software tools in professional workflows

- The adoption of its educational principles by other platforms

- The broader acceptance of stereoscopic techniques in independent filmmaking

Perhaps most importantly, the site demonstrated that meaningful innovation does not require massive corporate backing. Instead, it can emerge from individuals who deeply understand both the creative and technical dimensions of their craft.

DashwoodCinemaSolutions.com stands as a compelling example of how specialized knowledge, practical problem-solving, and a commitment to education can shape an entire segment of the film industry.

Through the vision of Tim Dashwood, the site became more than just a product platform—it evolved into a hub for learning, experimentation, and professional growth in the field of stereoscopic 3D.

While the technological landscape has continued to evolve, the principles embodied by Dashwood Cinema Solutions—accessibility, innovation, and community engagement—remain highly relevant. For filmmakers, editors, and technologists alike, the site’s legacy offers valuable lessons in how to build tools that truly serve the needs of creative professionals.